Russia removes US, UK, Japan flags from rocket carrying OneWeb satellites but keeps Indian flag, puts conditions for the launch

On a space rocket, a video...

1. India JONATHAN Jonathan Amaral $47,042.43 PLAYERUNKNOWN’S BATTLEGROUNDS Mobile $47,042.43 100.00%
2. India Neyooooo Suraj Majumdar $46,639.23 PLAYERUNKNOWN’S BATTLEGROUNDS Mobile $46,639.23 100.00%
3. India Ghatak Abhijeet...

, an NGO that focuses on socio-economic development, has asked the government to restrict BGMI (Battlegrounds Mobile India), alleging that it poses a threat to India’s...

A 16-year-old has been jailed for five years over a “terrorist plot” to blow up a Federal Security Service (FSB) building in Minecraft. The Siberian teenager...

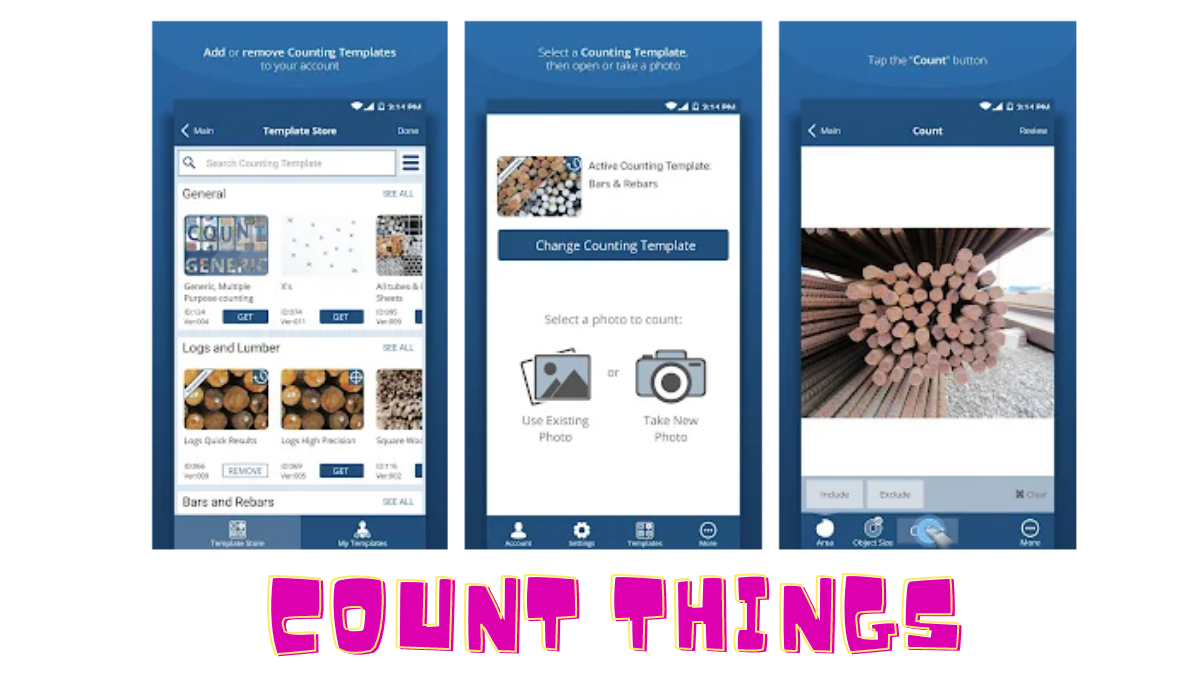

The CountThings from Photos app helps businesses automate counting. Open the app, select the right Counting Template for your items, take a photo, and count. If we don’t...

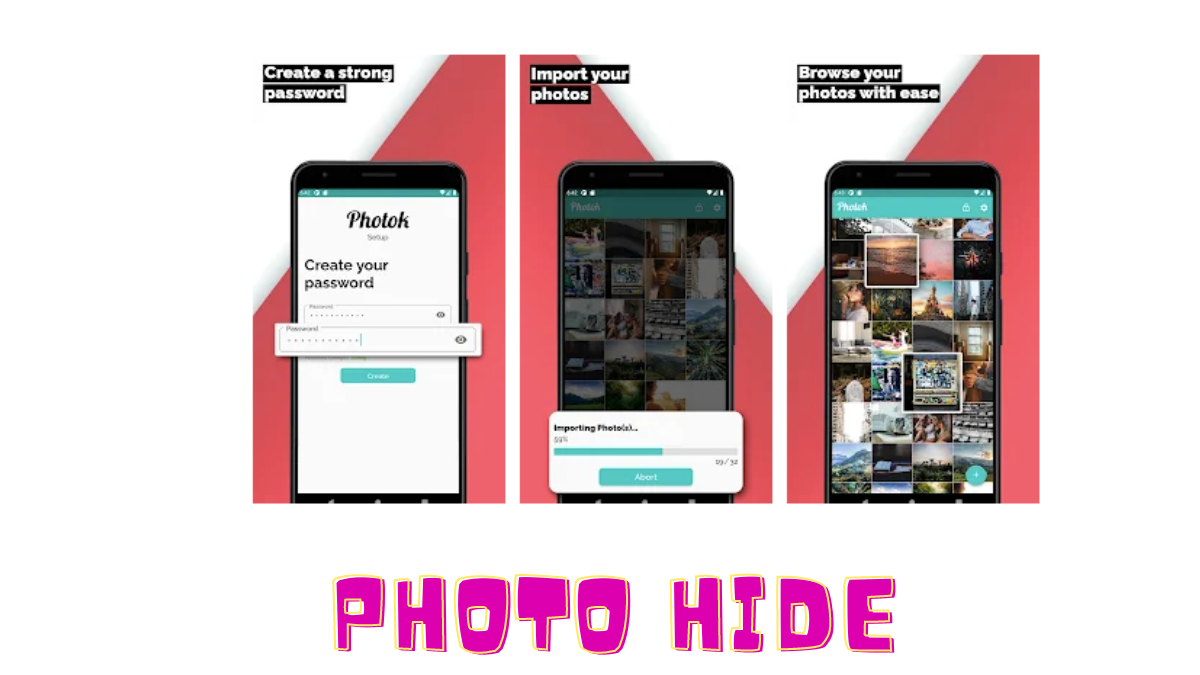

Some mobile users want to hide their photos from others, and there are many applications for this in the Play Store. But is it safe to hide photos...

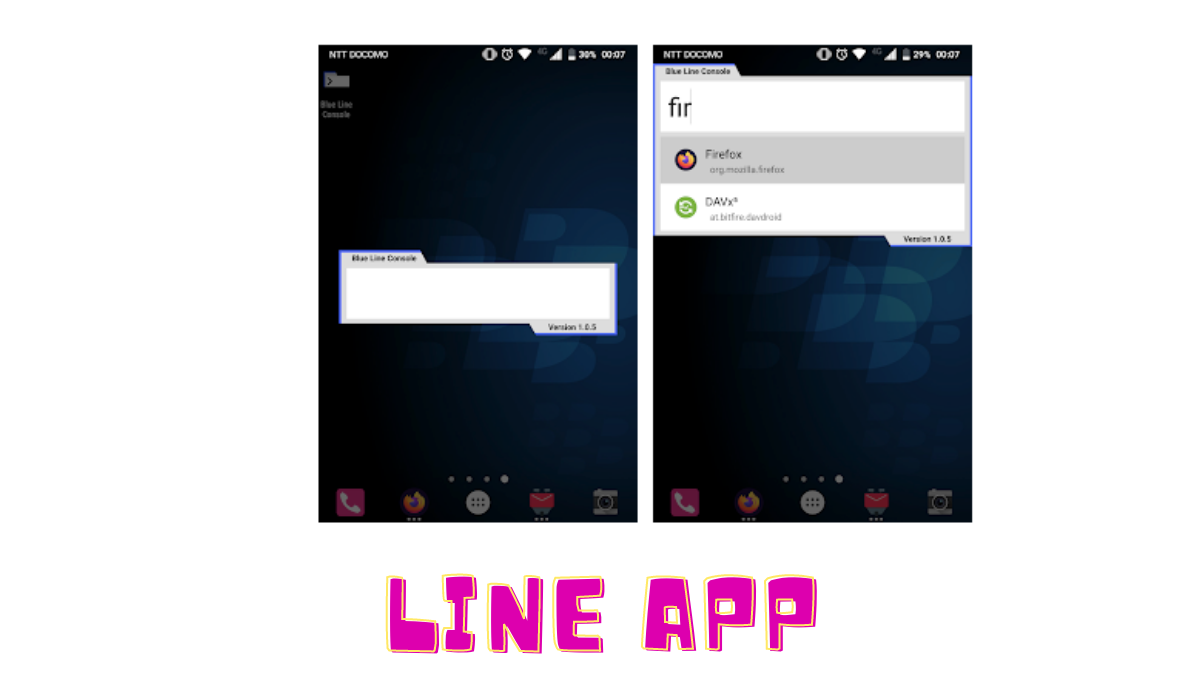

Everyone knows that there are many applications on a mobile phone. However, it sometimes becomes difficult to find a particular application. This little application works very well in such a...

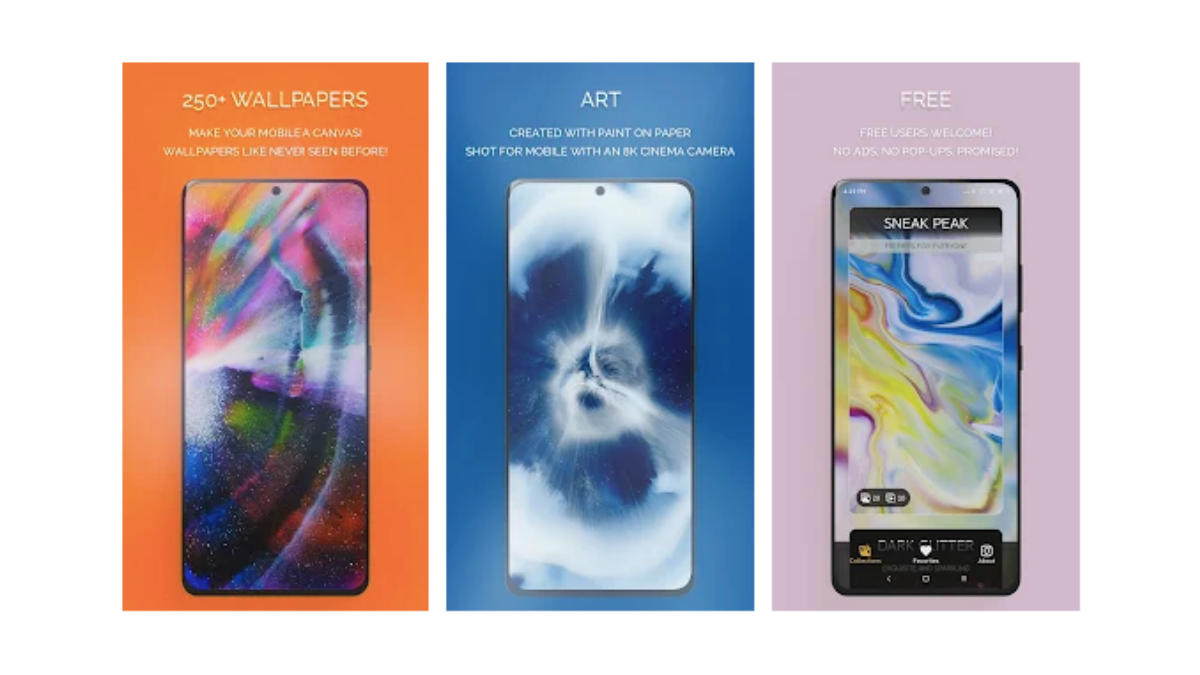

Almost everyone sets a wallpaper on their mobile, and many types of applications are downloaded for this purpose. But even then they are removing the ones that can’t...

We usually need internet access every day, and for this we use WiFi instead of mobile data. But one question that comes up here is how much data...

Usually, copying any text on an Android phone is not an easy task. Copying text on an Android phone is definitely a no-brainer. Sometimes refuse...